A 48-Hour Comparative Study of Global Cyber Threat Patterns

Date: October 2025

Author: Tony Jokiranta

Duration: 48 hours per region

Total Unique Attackers: 542 IPs across both regions

Executive Summary

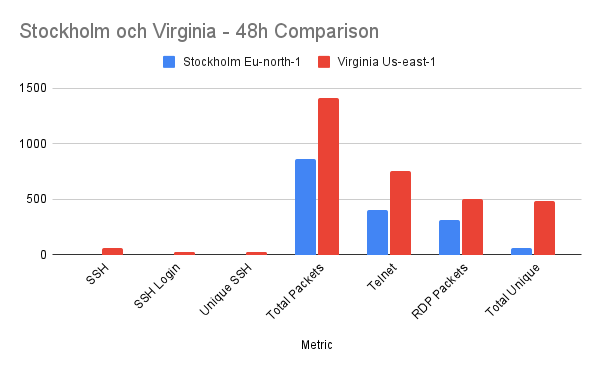

This cybersecurity research project deployed identical SSH honeypots in two AWS regions, Stockholm (eu-north-1) and Virginia (us-east-1), to analyze regional differences in cyber attack patterns. Over a 48-hour period, the Virginia honeypot attracted roughly 8 times as many unique attacker IPs (482 vs ~60) and recorded 57 SSH connection attempts, while Stockholm recorded none.

Key Finding: During this observation window, the US-based honeypot saw significantly higher attack engagement, while the EU honeypot received only automated reconnaissance scanning. The project also revealed extensive IoT botnet activity: on the Virginia honeypot, Telnet drew 312 unique attacker IPs, about 13.5x as many as SSH (23).

Comparative analysis of attack patterns: Stockholm (EU North) vs Virginia (US East) over 48 hours.

Methodology

Infrastructure Setup

Identical Honeypot Configuration:

- Platform: AWS EC2 instances (both free tier): t2.micro in Virginia, t3.micro in Stockholm.

- Honeypot Software: Cowrie SSH/Telnet honeypot (Docker).

- Operating System: Ubuntu 24.04 LTS.

- Monitoring: tcpdump for packet capture, AWS CloudWatch for metrics.

- Duration: 48 hours per region.

Exposed Services:

- Port 22: SSH (Cowrie honeypot)

- Port 23: Telnet

- Port 3306: MySQL

- Port 5432: PostgreSQL

- Port 3389: RDP (Remote Desktop)

- Port 2222: Management SSH (Secured with key authentication)

Security Group Configuration:

All honeypot ports (22, 23, 3306, 5432, 3389) were intentionally exposed to 0.0.0.0/0 (entire internet) to maximize attack surface and data collection.
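The exposure rule described above can be sketched as data. The report does not say whether the security group was configured through the console, the CLI, or an SDK, so this is only an illustration: it builds one TCP ingress permission per honeypot port in the dictionary shape that boto3's `authorize_security_group_ingress` accepts for its `IpPermissions` parameter. The function name, the description string, and the decision to leave out the management port are assumptions.

```python
from typing import Dict, List

# Honeypot ports listed in this report. The management SSH port (2222)
# is left out on purpose: it should not be opened to the whole internet.
HONEYPOT_PORTS = [22, 23, 3306, 5432, 3389]


def build_ingress_rules(ports: List[int], cidr: str = "0.0.0.0/0") -> List[Dict]:
    """Return one TCP ingress permission per port, open to the given CIDR."""
    return [
        {
            "IpProtocol": "tcp",
            "FromPort": port,
            "ToPort": port,
            # The description text is a hypothetical label, not from the report.
            "IpRanges": [{"CidrIp": cidr, "Description": "honeypot exposure"}],
        }
        for port in ports
    ]


rules = build_ingress_rules(HONEYPOT_PORTS)
for rule in rules:
    print(rule["FromPort"], rule["IpRanges"][0]["CidrIp"])
```

Keeping the rules as plain data makes it easy to review exactly which ports are public before anything is applied to a real security group.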

Data Collection

- Cowrie logs: Complete SSH/Telnet session recordings, including credentials and commands.

- tcpdump: Full packet captures for multiport analysis.

- CloudWatch: Network traffic metrics (bytes, packets, connections).

- IP Intelligence: AbuseIPDB and VirusTotal analysis of attacker sources.
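As a rough illustration of how the Cowrie logs can be turned into the connection and login counts reported below, here is a minimal sketch. It assumes Cowrie's JSON output, where each line is one event with an `eventid` (for example `cowrie.session.connect` or `cowrie.login.failed`) and a `src_ip`. The sample lines use documentation-range IPs and are invented for the example; they are not data from this study, and the report does not describe the author's actual parsing method.

```python
import json
from collections import Counter
from typing import Dict, Iterable


def summarize_cowrie(lines: Iterable[str]) -> Dict:
    """Count connections, login attempts, and unique source IPs in Cowrie JSON events."""
    connections = 0
    login_attempts = 0
    usernames: Counter = Counter()
    source_ips = set()
    for line in lines:
        line = line.strip()
        if not line:
            continue
        try:
            event = json.loads(line)
        except json.JSONDecodeError:
            continue  # skip lines that are not valid JSON
        if not isinstance(event, dict):
            continue
        event_id = event.get("eventid", "")
        if event_id == "cowrie.session.connect":
            connections += 1
            if event.get("src_ip"):
                source_ips.add(event["src_ip"])
        elif event_id in ("cowrie.login.failed", "cowrie.login.success"):
            login_attempts += 1
            usernames[event.get("username", "")] += 1
    return {
        "connections": connections,
        "login_attempts": login_attempts,
        "unique_ips": len(source_ips),
        "top_usernames": usernames.most_common(3),
    }


# Invented sample events (not real study data).
sample_log = [
    '{"eventid": "cowrie.session.connect", "src_ip": "203.0.113.10"}',
    '{"eventid": "cowrie.login.failed", "src_ip": "203.0.113.10", "username": "root", "password": "admin"}',
    '{"eventid": "cowrie.session.connect", "src_ip": "203.0.113.11"}',
    '{"eventid": "cowrie.login.failed", "src_ip": "203.0.113.11", "username": "pi", "password": "raspberry"}',
    '{"eventid": "cowrie.login.failed", "src_ip": "203.0.113.11", "username": "root", "password": "123456789"}',
    "not json",
]
summary = summarize_cowrie(sample_log)
print(summary)
```

Test connections made during deployment would still need to be filtered out, for example by excluding the operator's own source IP before counting.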

Results: Stockholm Honeypot (eu-north-1)

Attack Summary

| Metric | Value |
| --- | --- |
| SSH Connections | 0 |
| SSH Login Attempts | 0 |
| Total Multi-port Packets | 864 |
| Unique Attacker IPs | ~60 |
| Deployment Duration | 48 hours |

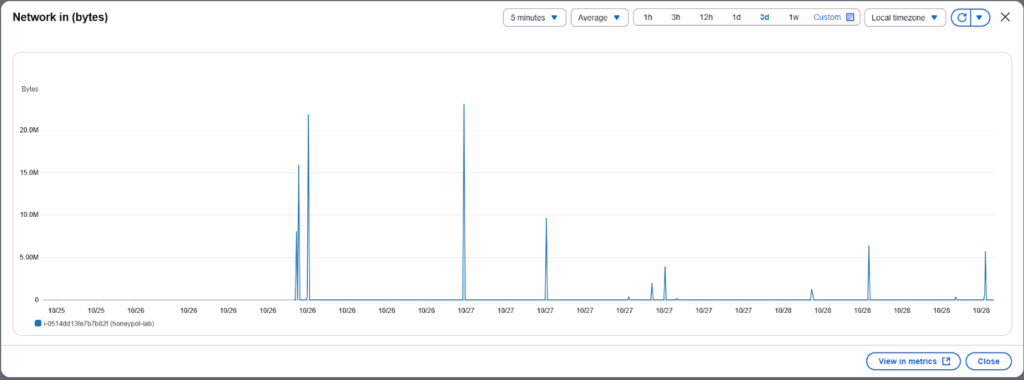

Stockholm honeypot CloudWatch metrics showing modest traffic spikes but no sustained attack campaigns.

Stockholm packet count remained relatively low with occasional reconnaissance bursts.

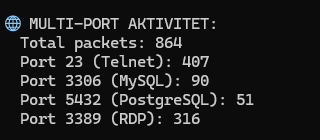

Port-Level Breakdown

Stockholm demonstrated reconnaissance-focused activity:

- Telnet (Port 23): 407 packets (~47% of total traffic).

- RDP (Port 3389): 316 packets (~37%).

- MySQL (Port 3306): 90 packets (~10%).

- PostgreSQL (Port 5432): 51 packets (~6%).

- SSH (Port 22): 0 packets (0%).
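The percentages in the list above follow directly from the packet counts. A minimal sketch of that arithmetic, using the Stockholm figures from this report (the function name is just an illustrative choice):

```python
from typing import Dict


def traffic_share(packets_by_port: Dict[int, int]) -> Dict[int, float]:
    """Return each port's share of total packets as a percentage (one decimal)."""
    total = sum(packets_by_port.values())
    if total == 0:
        return {port: 0.0 for port in packets_by_port}
    return {port: round(100 * count / total, 1) for port, count in packets_by_port.items()}


# Packet counts per port for the Stockholm honeypot, taken from this report.
stockholm_packets = {23: 407, 3389: 316, 3306: 90, 5432: 51, 22: 0}
stockholm_shares = traffic_share(stockholm_packets)

for port, share in stockholm_shares.items():
    print(f"port {port}: {share}%")
```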

Analysis

The Stockholm honeypot received extensive port scanning activity but completed zero SSH connections. The pattern shows:

- Automated Reconnaissance: Bots scanning for open ports without following through with exploitation attempts.

- Low Engagement: Scanners identified the services but did not attempt authentication.

- Telnet Preference: Even in the EU region, Telnet received the most attention.

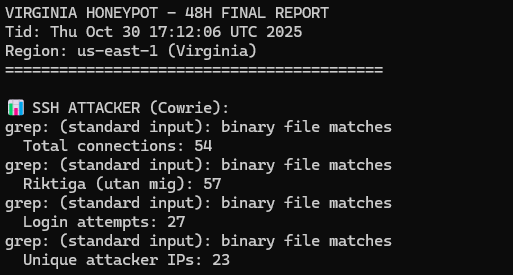

Results: Virginia Honeypot (us-east-1)

Attack Summary

| Metric | Value |
| --- | --- |
| SSH Connections | 57 |
| SSH Login Attempts | 27 |
| Unique SSH Attacker IPs | 23 |
| Total Multi-Port Packets | 1416 |
| Total Unique Attacker IPs | 482 |
| Deployment Duration | 48 hours |

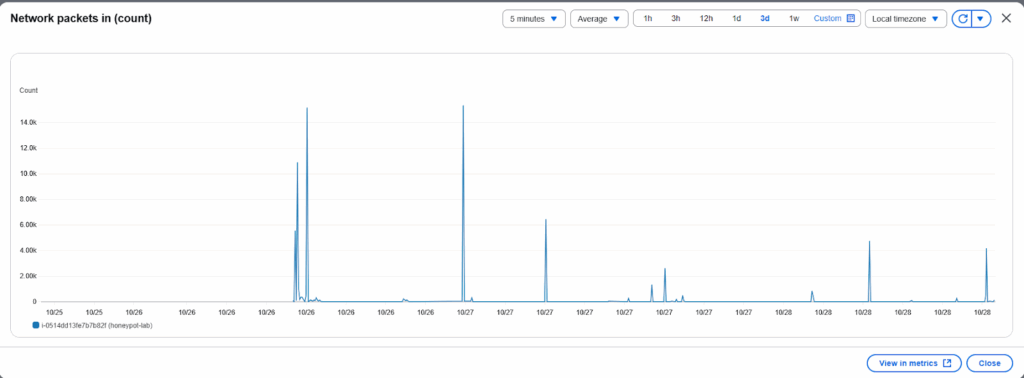

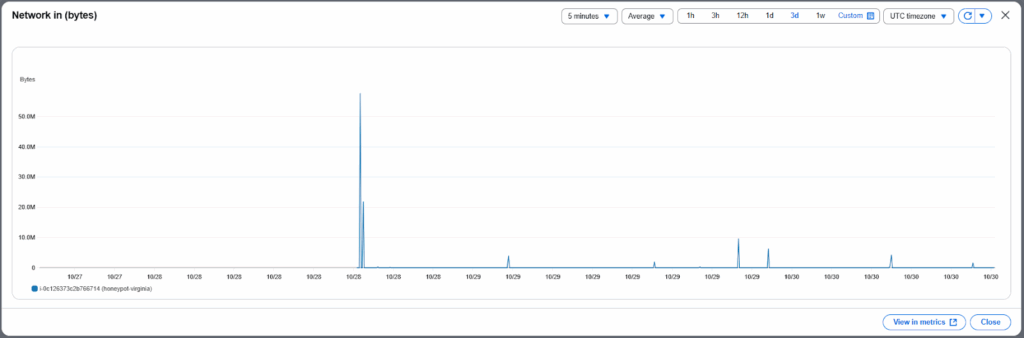

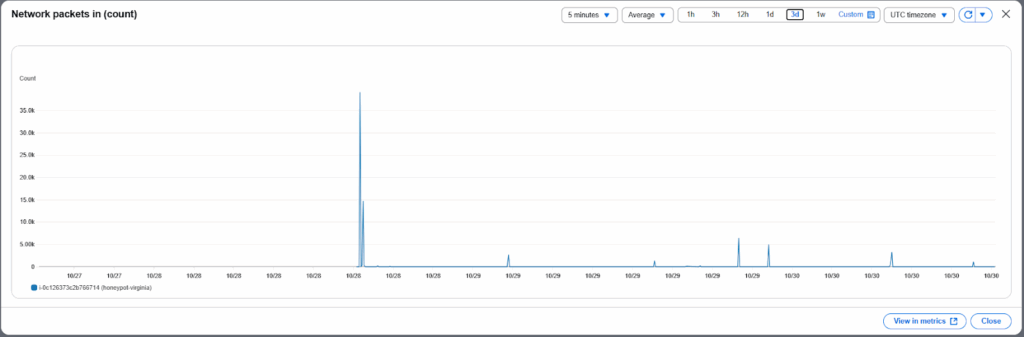

Virginia honeypot experienced significantly higher traffic with multiple distinct attack waves visible on October 28th, 29th and 30th.

Packet analysis reveals a major attack spike on October 28th with a peak of 35,000 packets, followed by sustained activity throughout the observation period.

Port-Level Breakdown

Virginia was targeted by multi-vector attacks:

| Port | Protocol | Packets | Unique IPs | % of Traffic |
| --- | --- | --- | --- | --- |
| 23 | Telnet | 751 | 312 | 53% |
| 3389 | RDP | 498 | 87 | 35% |
| 3306 | MySQL | 98 | 45 | 7% |
| 5432 | PostgreSQL | 69 | 29 | 5% |
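A per-port table like this one can be derived from a list of (source IP, destination port) pairs. The sketch below shows only the aggregation step; how those pairs are pulled out of the tcpdump capture is not described in this report and is left out. The sample records are invented and use documentation-range addresses.

```python
from collections import defaultdict
from typing import Dict, Iterable, Tuple


def per_port_stats(records: Iterable[Tuple[str, int]]) -> Dict[int, Dict[str, int]]:
    """Aggregate packet counts and unique source IPs per destination port."""
    packets = defaultdict(int)
    ips = defaultdict(set)
    for src_ip, dst_port in records:
        packets[dst_port] += 1
        ips[dst_port].add(src_ip)
    return {
        port: {"packets": packets[port], "unique_ips": len(ips[port])}
        for port in packets
    }


# Invented sample records (source IP, destination port), not study data.
sample_records = [
    ("198.51.100.7", 23),
    ("198.51.100.7", 23),
    ("198.51.100.9", 23),
    ("203.0.113.5", 3389),
]
port_stats = per_port_stats(sample_records)
print(port_stats)
```

Counting unique IPs separately from packets matters here: a single noisy scanner can inflate the packet column without adding to the number of distinct attackers.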

SSH Attack Analysis

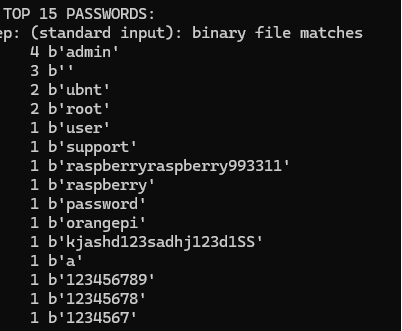

Virginia honeypot received 27 login attempts using various credentials over the 48-hour period.

Pattern Analysis: The prevalence of IoT-specific credentials (ubnt, raspberry, orangepi) indicates automated botnet activity specifically targeting embedded devices and single-board computers. The presence of default vendor credentials alongside classic weak passwords (admin, password, 123456789) demonstrates attackers testing both device-specific and generic authentication vectors.
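The split between device-specific and generic credentials can be made explicit with a small classifier. This is a sketch under assumptions: the two word lists contain only the examples named in the paragraph above, the category labels are invented, and the sample attempts are illustrative rather than the 27 attempts actually recorded.

```python
from collections import Counter

# Credential examples named in this report; real lists would be much longer.
IOT_DEFAULTS = {"ubnt", "raspberry", "orangepi"}
GENERIC_WEAK = {"admin", "password", "123456789"}


def classify_password(password: str) -> str:
    """Label a password as an IoT default, a generic weak password, or other."""
    value = password.strip().lower()
    if value in IOT_DEFAULTS:
        return "iot-default"
    if value in GENERIC_WEAK:
        return "generic-weak"
    return "other"


# Invented sample of attempted passwords (not the study's raw data).
sample_attempts = ["ubnt", "raspberry", "admin", "orangepi", "123456789", "S3cret!x"]
breakdown = Counter(classify_password(p) for p in sample_attempts)
print(breakdown)
```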

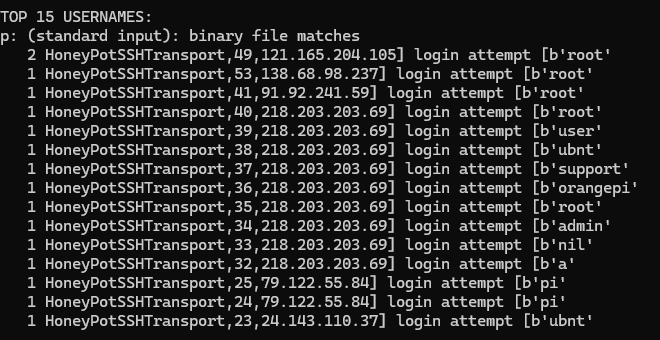

Username analysis shows ‘root’ dominated with 5 attempts, followed by ‘pi’ and ‘ubnt’.

Geographic Distribution of Attackers

Top 10 SSH Attacker IPs (Virginia)

Analysis of the 10 most active SSH attackers revealed diverse geographic origins:

| IP Address | Country | ISP | Abuse Score | Reports |
| --- | --- | --- | --- | --- |
| 121.165.204.105 | South Korea | Korea Telecom | 100% | 6,712 |
| 146.190.174.211 | United States of America | DigitalOcean LLC | 100% | 26,632 |
| 20.168.120.227 | United States of America | Microsoft Corporation | 100% | 3,177 |
| 207.154.228.110 | Germany | DigitalOcean LLC | 100% | 2,522 |
| 207.154.232.101 | Germany | DigitalOcean LLC | 100% | 24,846 |
| 45.80.35.247 | France | Freebox Pro – pool network | 100% | 939 |
| 87.236.176.140 | United Kingdom | Driftnet Ltd | 100% | 7,196 |
| 218.203.203.69 | China | China Mobile Communications Corporation | 100% | 1,918 |
| 59.45.100.248 | China | Chinanet Liaoning Province Network | 70% | 77 |
| 198.235.24.90 | Taiwan | Palo Alto Networks Inc | 0% | 35,000 |

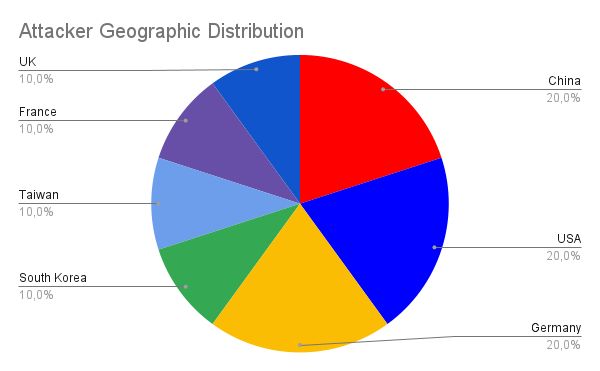

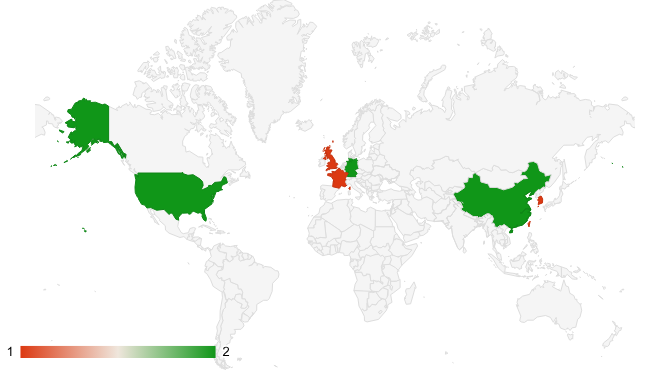

Geographic distribution of SSH attackers: China (20%), USA (20%), Germany (20%), with smaller percentages from South Korea, Taiwan, France, and UK.

Global distribution of attack sources spanning Asia, Europe, and North America.

Geographic Breakdown:

- China: 20% (2 IPs).

- United States: 20% (2 IPs).

- Germany: 20% (2 IPs).

- South Korea: 10% (1 IP).

- Taiwan: 10% (1 IP).

- France: 10% (1 IP).

- United Kingdom: 10% (1 IP).
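The breakdown above is a simple tally over the country column of the top-10 table. A short sketch of that tally, with the ten countries copied from the table (the variable names are illustrative):

```python
from collections import Counter

# Country of each of the 10 most active SSH attacker IPs, from the table above.
top10_countries = [
    "South Korea", "United States", "United States", "Germany", "Germany",
    "France", "United Kingdom", "China", "China", "Taiwan",
]

counts = Counter(top10_countries)
country_share = {
    country: round(100 * n / len(top10_countries), 1) for country, n in counts.items()
}

for country, share in sorted(country_share.items(), key=lambda item: -item[1]):
    print(f"{country}: {share}% ({counts[country]} IPs)")
```

With only ten IPs in the sample, each address moves a country's share by 10 percentage points, so these figures describe this top-10 list rather than the wider attacker population.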

Analysis: The attack sources demonstrate global distribution across multiple continents, with no single region dominating. The presence of both residential ISPs (Korea Telecom) and cloud providers (DigitalOcean, Palo Alto Networks) indicates a mix of compromised home networks and rented server infrastructure.

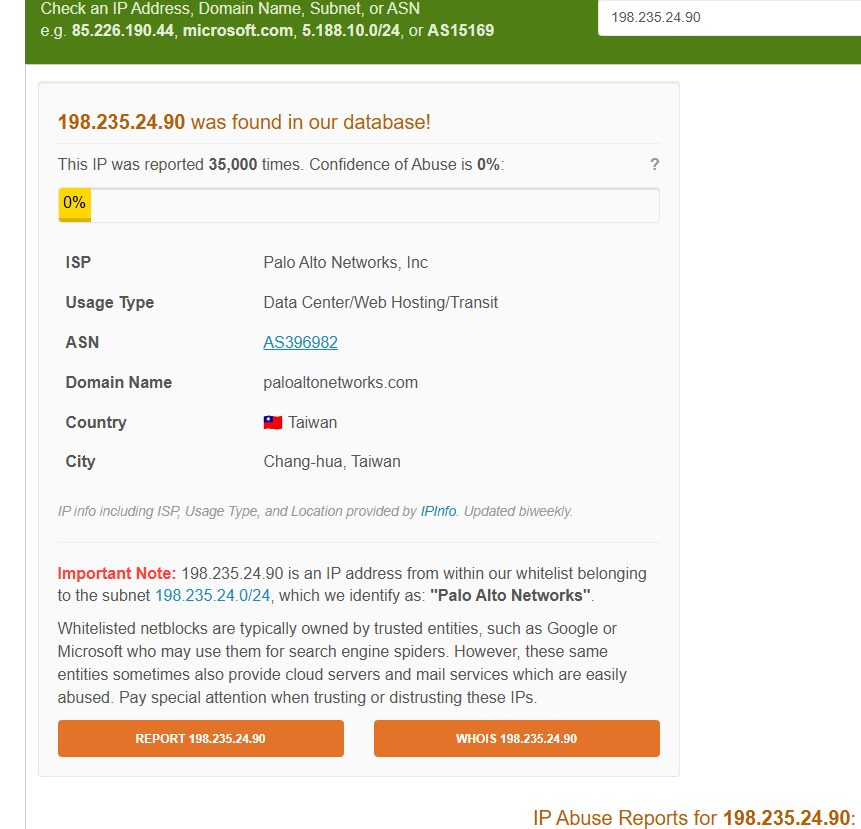

Interesting Discovery: Whitelisted Malicious Infrastructure

One of the most interesting findings involved an IP address that appeared normal on surface analysis: 198.235.24.90 (Palo Alto Networks).

AbuseIPDB Analysis:

- Abuse Confidence Score: 0% (Whitelisted).

- Total Reports: 35,000.

- ISP: Palo Alto Networks Inc.

- Classification: Data Center/Web Hosting.

- Country: Taiwan (or USA, depending on which lookup service is used).

AbuseIPDB shows 0% abuse score due to automatic whitelisting of Palo Alto Networks subnet, despite 35,000 abuse reports.

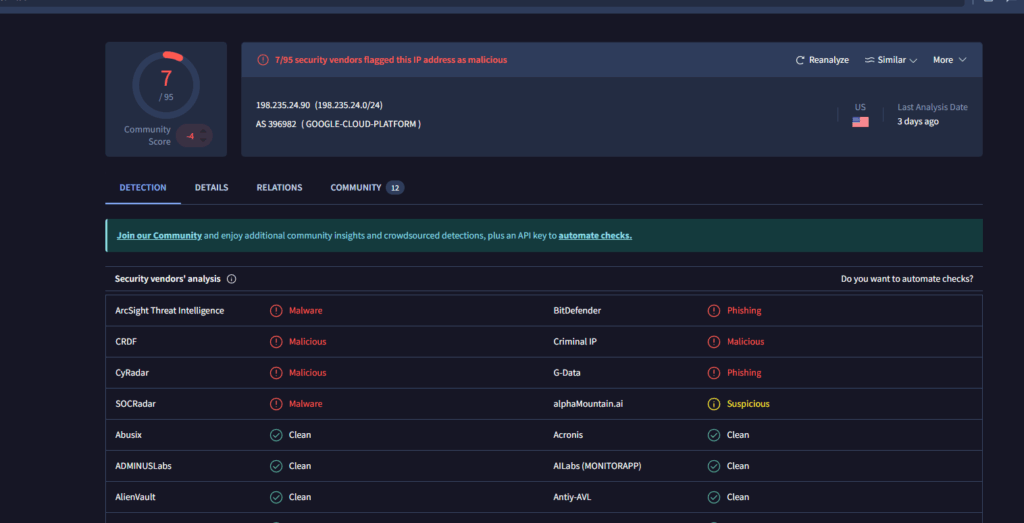

VirusTotal Analysis:

- Security Vendors Flagging as Malicious: 7/95.

- Categories: Malware, Phishing, Malicious.

- Community Score: Negative.

VirusTotal analysis contradicts AbuseIPDB’s whitelist status, with 7 out of 95 security vendors flagging this IP as malicious. VirusTotal also geolocates it to the USA rather than Taiwan.

Analysis

This case shows how activity from trusted infrastructure can slip past IP-based blocking:

- Automatic Whitelisting: AbuseIPDB automatically whitelists subnets belonging to legitimate security companies like Palo Alto Networks.

- High Report Volume: Despite whitelisting, this IP received 35,000 abuse reports, suggesting one of the following:

  - Legitimate security research activities being misreported as attacks.

  - Actual malicious activity from a trusted network range.

  - IP address spoofing or tunneling.

- Trust-Based Defense Limitations: Defenders face a dilemma—blocking trusted security vendor ranges may disrupt legitimate services, while allowing them creates potential blind spots.

- Divergent Intelligence Sources: The contradiction between AbuseIPDB (0% malicious) and VirusTotal (7/95 vendors flagged) demonstrates why security teams must use multiple threat intelligence sources.

This highlights a critical challenge in modern cybersecurity: Reputation-based defenses have inherent limitations when trusted infrastructure is involved, whether through compromise, legitimate research activities, or sophisticated evasion techniques.
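One way to act on disagreement between sources is to flag any IP whose reputation score looks clean while other evidence points the other way. The sketch below is a hypothetical rule, not a method used in this project: the `IpIntel` fields, the function name, and all thresholds are assumptions. The two sample records use figures quoted in this report.

```python
from dataclasses import dataclass


@dataclass
class IpIntel:
    ip: str
    abuse_score: int    # AbuseIPDB confidence score, 0-100
    abuse_reports: int  # total AbuseIPDB reports
    vt_malicious: int   # VirusTotal vendors flagging the IP as malicious
    vt_total: int       # VirusTotal vendors consulted


def needs_manual_review(
    intel: IpIntel,
    low_score: int = 25,      # hypothetical threshold
    min_reports: int = 100,   # hypothetical threshold
    min_vt_flags: int = 3,    # hypothetical threshold
) -> bool:
    """Flag IPs whose low reputation score conflicts with other evidence."""
    looks_clean = intel.abuse_score < low_score
    conflicting = (
        intel.abuse_reports >= min_reports or intel.vt_malicious >= min_vt_flags
    )
    return looks_clean and conflicting


palo_alto = IpIntel("198.235.24.90", 0, 35000, 7, 95)
digitalocean = IpIntel("207.154.232.101", 100, 24846, 10, 95)

print(needs_manual_review(palo_alto))     # sources disagree
print(needs_manual_review(digitalocean))  # sources agree it is malicious
```

The rule deliberately returns a review flag rather than a verdict, since the report and vendor counts alone cannot distinguish misreported research scanning from real abuse.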

Comparative Analysis: Stockholm vs Virginia

Direct Comparison

| Metric | Stockholm (48h) | Virginia (48h) | Difference |
| --- | --- | --- | --- |
| SSH Connections | 0 | 57 | +57 |
| SSH Login Attempts | 0 | 27 | +27 |
| Unique SSH IPs | 0 | 23 | +23 |
| Multi-port Packets | 864 | 1416 | +64% (+552) |
| Telnet Packets | 407 | 751 | +84.5% (+344) |
| Telnet Unique IPs | ~40 | 312 | +680% |
| MySQL Packets | 90 | 98 | +9% (+8) |
| MySQL Unique IPs | ~15 | 45 | +200% |
| PostgreSQL Packets | 51 | 69 | +35% (+18) |
| PostgreSQL Unique IPs | ~10 | 29 | +190% |
| RDP Packets | 316 | 498 | +58% (+182) |
| RDP Unique IPs | ~25 | 87 | +248% |
| Total Unique IPs | ~60 | 482 | +703% (+422) |

Note: Stockholm per-port unique IP counts are estimates (I didn’t extract per-port IPs for that region).
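The Difference column is a percent change with Stockholm as the baseline. A small sketch of that calculation, which also shows why no percentage can be given for the SSH rows: with a baseline of zero the ratio is undefined. The function name is illustrative.

```python
from typing import Optional


def pct_change(baseline: float, value: float) -> Optional[float]:
    """Percent change from baseline to value; None when the baseline is zero."""
    if baseline == 0:
        return None  # undefined: cannot divide by a zero baseline
    return round(100 * (value - baseline) / baseline, 1)


# (Stockholm, Virginia) pairs taken from the comparison table.
comparisons = {
    "Multi-port Packets": (864, 1416),
    "Telnet Packets": (407, 751),
    "Total Unique IPs": (60, 482),
    "SSH Connections": (0, 57),
}

for metric, (stockholm, virginia) in comparisons.items():
    change = pct_change(stockholm, virginia)
    label = "n/a (zero baseline)" if change is None else f"{change:+}%"
    print(f"{metric}: {label}")
```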

Key Insights

1. Engagement vs Reconnaissance

- Stockholm: Received extensive port scanning but zero exploitation attempts.

- Virginia: Received both scanning and active exploitation/authentication attempts.

- Conclusion: In this sample, scanning reached both regions, but active authentication attempts were concentrated on the US-hosted instance.

2. Cloud Provider Infrastructure

- Multiple attacks originated from legitimate cloud providers (DigitalOcean, Palo Alto Networks, Google Cloud Platform).

- Demonstrates widespread abuse of legitimate hosting for malicious purposes.

3. Geographic Diversity

- Attacks originated from 7+ countries across the world.

- No single country dominated (each accounted for 10-20% of the top 10 SSH attacker IPs).

- Conclusion: The botnet infrastructure observed is globally distributed rather than concentrated in a single country.

Project Limitations and Context

Sample Size and Considerations

Important Note: This study represents a 48-hour snapshot from a single honeypot deployment in each region. The findings reflect attack patterns observed during this specific time period (October 26-30, 2025) and should not be interpreted as definitive statements about regional threat landscapes.

Key Limitations

1. Temporal Constraints

- 48-hour observation window is insufficient for identifying long-term patterns.

- Attack patterns vary significantly by day of week, season, and current campaign activity.

- Future deployments may yield different results.

2. Geographic Variability

- This project: Virginia received active exploitation attempts, while Stockholm received only reconnaissance.

- Other studies or projects may show Stockholm receiving more attacks, depending on:

  - Current botnet campaign targets.

  - Seasonal attack patterns.

  - Specific threat actor focus at time of deployment.

  - Industry-specific targeting (finance vs tech vs infrastructure).

3. Single Instance Limitation

- Results represent one data point, not a comprehensive trend.

- Regional attack patterns fluctuate based on numerous factors beyond geography.

- A deployment next month could show reversed patterns.

Appropriate Interpretation

What I can conclude:

- Geographic location influences attack patterns and volume.

- Different regions may attract different attack types (exploitation vs reconnaissance).

- Multi-region honeypot deployment captures diverse threat intelligence.

What I cannot conclude:

- The US always receives more attacks than the EU.

- The EU is safer than the US for deployments.

- Stockholm is less targeted than Virginia in general.

The correct interpretation: During this specific 48-hour period, the Virginia honeypot attracted more completed attack attempts, while the Stockholm honeypot received only reconnaissance scanning. These results suggest that geographic location matters, but the specific patterns observed are point-in-time observations that may not represent long-term trends.

Real World Context: Sweden is a High-Value Target

While this study observed lower exploitation activity in the Stockholm region during the observation period, Sweden has experienced significant high-profile cyberattacks in recent years:

2021: Coop Sweden – Supply chain attack via Kaseya VSA affecting 800+ stores.

2025: Miljödata – A major data breach at Miljödata AB resulted in the exposure of personal data belonging to over one million Swedish citizens.

2025: Svenska Kraftnät – The Swedish state-owned power-grid operator confirmed a cyberattack in October 2025; threat actors claim to have exfiltrated approximately 280 GB of data.

These incidents demonstrate that Sweden is absolutely a target for sophisticated threat actors, particularly for:

- Critical infrastructure (energy, telecommunications).

- Government services.

- Personal data repositories.

- Supply chain attacks.

Why the disconnect with my honeypot data?

- Sophisticated vs Opportunistic Attacks: High-profile Swedish breaches involve targeted campaigns, not opportunistic bot scanning that honeypots primarily detect.

- Attack Sophistication: Advanced threats don’t show up in honeypot data—they use reconnaissance methods that avoid obvious detection.

- Target Selection: Real attackers target specific Swedish organizations, not random IPs.

- Honeypot Limitation: My honeypot detected automated bot activity, not nation-state or sophisticated criminal campaigns.

Key Insight: My honeypot data reveals opportunistic, automated threat patterns, not targeted, sophisticated attacks. Sweden faces both types of threats, but honeypots primarily detect the former.

Key Observations and Learnings

Note: The observations are based on patterns detected in this 48-hour study. Attack patterns may vary significantly over time and by specific organizational context.

Regional Variations in Attack Activity

Observations from this project:

- Virginia honeypot captured more completed exploitation attempts (57 SSH connections vs 0).

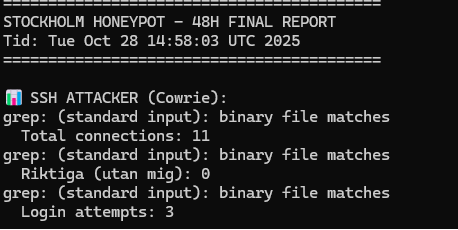

Stockholm honeypot received 0 SSH attacks from external sources over 48 hours. The 11 total connections shown are from my own testing during deployment, so the number of real attack attempts is 0.

Virginia honeypot received 57 SSH connections from external attackers (my own test connections excluded), compared with none for Stockholm.

- Stockholm honeypot received extensive reconnaissance and port scanning (864 packets).

- Both regions provided valuable but different threat intelligence.

IoT as Primary Attack Vector

Observations from this study:

- Telnet drew about 13.5x as many unique attacker IPs as SSH (312 vs 23).

- Password attempts focused heavily on IoT device defaults (ubnt, raspberry, orangepi).

- Traditional SSH represented a minority of total attack activity.

Learning: This project changed my understanding of modern threats. I expected SSH to be the primary target, but Telnet attacks outnumbered SSH by 13.5x. When I analyzed the passwords being tested (ubnt, raspberry, orangepi), I realized these are default credentials for specific IoT devices – routers, single-board computers, and embedded systems. It became clear that attackers aren’t just looking for servers; they’re hunting for IoT devices that might still have default passwords.

IP Address Complexity

Observations from this project:

- IP 198.235.24.90 (Palo Alto Networks): 0% AbuseIPDB score but 35,000 reports.

- IP 207.154.232.101 (DigitalOcean): 100% score with 24,846 reports.

- Legitimate cloud providers appear frequently in attack source data.

Learning: I encountered something confusing: an IP with 35,000 abuse reports was marked as “safe” (0% malicious) by AbuseIPDB. How could 35,000 reports equal safe? I discovered it was automatically whitelisted because it belonged to Palo Alto Networks, a legitimate security company. But when I checked VirusTotal, it was flagged as malicious by 7 different security vendors. This taught me an important lesson: cybersecurity isn’t black and white, and different sources can disagree. I now understand why security teams need to check multiple databases instead of trusting just one.

Cloud Provider Abuse

Observations from this project:

- Attacks originated from legitimate cloud providers (DigitalOcean, Palo Alto Networks, Google Cloud Platform).

- DigitalOcean IP (207.154.232.101) had 24,846 abuse reports on AbuseIPDB and was flagged as malicious by 10/95 security vendors on VirusTotal.

- Mix of cloud servers and home internet connections (like Korea Telecom).

Learning: When I saw attacks coming from DigitalOcean and other major cloud providers, I wondered: “Why don’t defenders just block these IPs?” Then I realized the problem: if you block all DigitalOcean IPs or other cloud providers’ IPs, you also block thousands of legitimate websites and services. Attackers exploit this by renting cloud servers or compromising existing ones. This taught me that it’s not as simple as just “blocking bad IPs”. Defenders need smarter approaches that analyze the behavior of threat actors.

Attack Pattern and Variety

Observations from this project:

- The first attack on the Virginia instance occurred about 47 minutes after deployment.

- AWS CloudWatch logs showed short bursts of activity rather than a steady flow of traffic.

- Attack intensity varied noticeably over the 48-hour monitoring period.

Learning: I noticed that attacks didn’t happen at a constant rate; there were quiet periods and sudden spikes. The Virginia honeypot’s first attack came within 47 minutes, but activity varied during the 48 hours. This taught me that attackers aren’t just randomly scanning all the time. There are patterns based on which botnets are active, what time of day it is, and the current focus of threat campaigns. My 48-hour window on each server deployment captured only a very small snapshot; if I ran this again next week or in a month, I might see totally different patterns.
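The "quiet periods and sudden spikes" pattern can be checked numerically instead of by eye. The sketch below flags hours whose packet count exceeds the mean by more than `k` standard deviations. This is one simple heuristic, not the analysis performed in this project, and the hourly counts are invented for illustration; the value of `k` is an assumption that would need tuning on real CloudWatch data.

```python
from statistics import mean, pstdev
from typing import List


def find_bursts(hourly_counts: List[int], k: float = 1.5) -> List[int]:
    """Return indexes of hours whose count exceeds mean + k * standard deviation."""
    if not hourly_counts:
        return []
    threshold = mean(hourly_counts) + k * pstdev(hourly_counts)
    return [hour for hour, count in enumerate(hourly_counts) if count > threshold]


# Invented hourly packet counts: mostly quiet, with two sharp spikes.
sample_hours = [10, 12, 9, 11, 200, 10, 8, 12, 150, 9, 11, 10]
burst_hours = find_bursts(sample_hours)
print(burst_hours)
```

Because large spikes inflate both the mean and the standard deviation, a median-based threshold may behave better on longer captures.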

Personal Reflections and Next Steps

What I Would Do Differently

Longer observation period:

- If I did this project again, I would extend it to at least a week or a month. My 48-hour window showed interesting patterns, but I can’t tell if they are typical or just what happened this specific week. More data could reveal if the Virginia vs Stockholm difference is consistent or just a coincidence.

More Regions:

- I would like to deploy a honeypot in different regions, one on each continent, to see how patterns differ globally. This would show if the US vs EU differences observed in this project are unique or if every continent has its own unique patterns. It would also reveal if certain botnets are regional or if they operate globally across all continents.

What Surprised Me The Most

“IoT Dominance”:

- I expected SSH to be the main target. Discovering that Telnet received 13.5x more unique attackers completely changed my perspective on threats. It taught me that the stereotype of hackers attacking SSH servers is outdated: modern botnets are hunting IoT devices, or any device they can compromise.

IP Address Complexity:

- The Palo Alto Networks IP finding really made me question things. How can something have 35,000 abuse reports but still be marked as 0% malicious, even when users have reported the IP for suspicious activity? That made me realize I had been thinking about cybersecurity in the wrong way, as if it were just “good guys vs bad guys”, when real-world security is far more difficult and complex than I understood before this project.

Swedish Context and Real-World Relevance

While this project observed lower automated exploitation in Stockholm, Sweden remains a high-value target for sophisticated attacks. Recent incidents demonstrate this reality:

- 2021: Coop Sweden – 800+ stores hit by ransomware and unable to process payments.

- 2025: Svenska Kraftnät – Critical energy infrastructure attacked.

- 2025: Miljödata – Data of 1 million+ individuals leaked.

My honeypot captured automated, opportunistic bot scanning – the “background noise” of the internet. But these real-world Swedish attacks were targeted campaigns against specific organizations. This taught me that there are different types of threats:

- Automated/Opportunistic (what my honeypot detected) – Bots scanning everything, looking for easy targets.

- Targeted/Sophisticated (real Swedish breaches) – Attackers specifically choosing Swedish organizations, government, or infrastructure.

Experience Gained and Skills Practiced

Technical Skills:

- AWS EC2 deployment and management.

- Log analysis and pattern recognition.

- Network monitoring with CloudWatch.

- Threat Intelligence lookup (AbuseIPDB, VirusTotal).

Other Skills Practiced:

- Writing documentation that others could actually follow.

- Being honest about what my data actually shows vs what I hoped it would show.

- Realizing that 48 hours of data isn’t anywhere near enough to draw big conclusions.

Security Mindset:

- Always keep the attacker’s mindset in view when working on anything security-related.

- Security has proven to be even more complicated than I knew.

- There is no “one fix solution” that can solve everything.

Technical Appendix

Tools & Technologies

- Cowrie: Medium-interaction SSH/Telnet honeypot.

- Docker: Containerization for consistent deployment.

- tcpdump: Full packet capture for offline analysis.

- AWS CloudWatch: Network and system metrics.

- AbuseIPDB: IP reputation and abuse reporting database.

- VirusTotal: Multi-engine malware and URL scanner.

Ethical Considerations

- No Active Exploitation: Honeypot operated in passive monitoring mode only.

- No Counter-Attacks: Zero offensive actions taken against attackers.

- Data Privacy: No personally identifiable information collected beyond IP addresses.

Conclusion

This was my first time deploying a honeypot, and it taught me more than I expected.

My main finding was that geographic location matters for attack patterns, at least during my 48-hour observation period. Virginia received 57 SSH connection attempts while Stockholm got zero. But I learned an important lesson about making claims from limited data: just because Virginia got more attacks this week doesn’t mean it always will. Attack patterns change all the time and can depend on which botnets are active, what time of year it is, and many other factors I can’t control or predict from such a short project.

Key things I learned:

- Location matters but it’s complicated – Different regions show different patterns, but I can’t make generalizations from just 48 hours of data.

- Two types of threats exist – Automated bots (what I detected) vs targeted campaigns (what actually hits real organizations). My honeypot only saw the first type.

- IoT devices were the targets – I expected SSH attacks, but Telnet got 13.5x more attention. Botnets seem to be hunting IoT devices, not servers. This completely changed my understanding.

- IP reputation is complicated – That Palo Alto Networks IP (35,000 reports, 0% malicious) broke my simple “good IP vs bad IP” thinking. Security is even more complex than I realized.

Author: Tony Jokiranta

LinkedIn: https://www.linkedin.com/in/tonyjokiranta/

GitHub: https://github.com/TeeDjaay99